One of Apple’s apparent AI ambitions is to enable an on-device chatbot which can run on iPhones, rather than using servers to do the processing. This would be capable of accessing data stored on your iPhone, as well as boosting privacy …

Nvidia has taken the same approach, with a chatbot that runs on a Windows PC – naturally, one equipped with one of the company’s own high-end GPUs.

Apple working on on-device chatbot for iPhone

An Apple research paper published late last year seemed to point to the company enabling an on-device chatbot to run on an iPhone.

The paper is about minimizing the amount of data that needs to be transferred from flash storage to RAM when running a large language model (LLM). LLM is the generic term for AI chat systems that have been trained on large amounts of text.

This approach enables LLMs to run up to 25 times faster on devices with limited RAM.
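The paper's actual technique isn't described here, so the following is only a rough illustration of the general idea of reading less from storage. It assumes a toy weights file and a hypothetical `RowCache` class that pulls a row of weights from disk only when it is requested and keeps the most recently used rows in memory; none of these names come from Apple.

```python
import os
import struct
import tempfile
from collections import OrderedDict

ROW_LEN = 4              # floats per weight row (toy size, an assumption)
ROW_BYTES = ROW_LEN * 4  # each float32 takes 4 bytes


class RowCache:
    """Reads weight rows from a file on demand and keeps recent ones in RAM."""

    def __init__(self, path, capacity):
        self.path = path
        self.capacity = capacity
        self.rows = OrderedDict()  # row index -> tuple of floats
        self.bytes_read = 0        # how much we actually pulled from storage

    def get(self, index):
        if index in self.rows:
            self.rows.move_to_end(index)  # mark as most recently used
            return self.rows[index]
        with open(self.path, "rb") as f:
            f.seek(index * ROW_BYTES)
            raw = f.read(ROW_BYTES)
        self.bytes_read += len(raw)
        row = struct.unpack(f"<{ROW_LEN}f", raw)
        self.rows[index] = row
        if len(self.rows) > self.capacity:
            self.rows.popitem(last=False)  # evict the least recently used row
        return row


def write_toy_weights(path, n_rows):
    """Writes n_rows rows; row i holds the value float(i) repeated ROW_LEN times."""
    with open(path, "wb") as f:
        for i in range(n_rows):
            f.write(struct.pack(f"<{ROW_LEN}f", *[float(i)] * ROW_LEN))


path = os.path.join(tempfile.mkdtemp(), "weights.bin")
write_toy_weights(path, n_rows=100)

cache = RowCache(path, capacity=8)
for idx in [3, 7, 3, 7, 42]:
    cache.get(idx)

print(os.path.getsize(path))  # 1600 bytes on disk
print(cache.bytes_read)       # 48 bytes read: three distinct rows of 16 bytes
```

In this toy run only three of the 100 rows are ever read, and the repeat requests are served from RAM rather than from storage.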

While the paper doesn’t reveal any specifics about Apple’s plans, there have been increasing indications that iOS 18 is the point at which the company will finally launch a far more powerful version of Siri, using the type of generative AI tech dubbed AppleGPT. The next version of iOS, set to be unveiled at WWDC in June, is said to be the biggest update in the company’s history.

Nvidia on-device chatbot

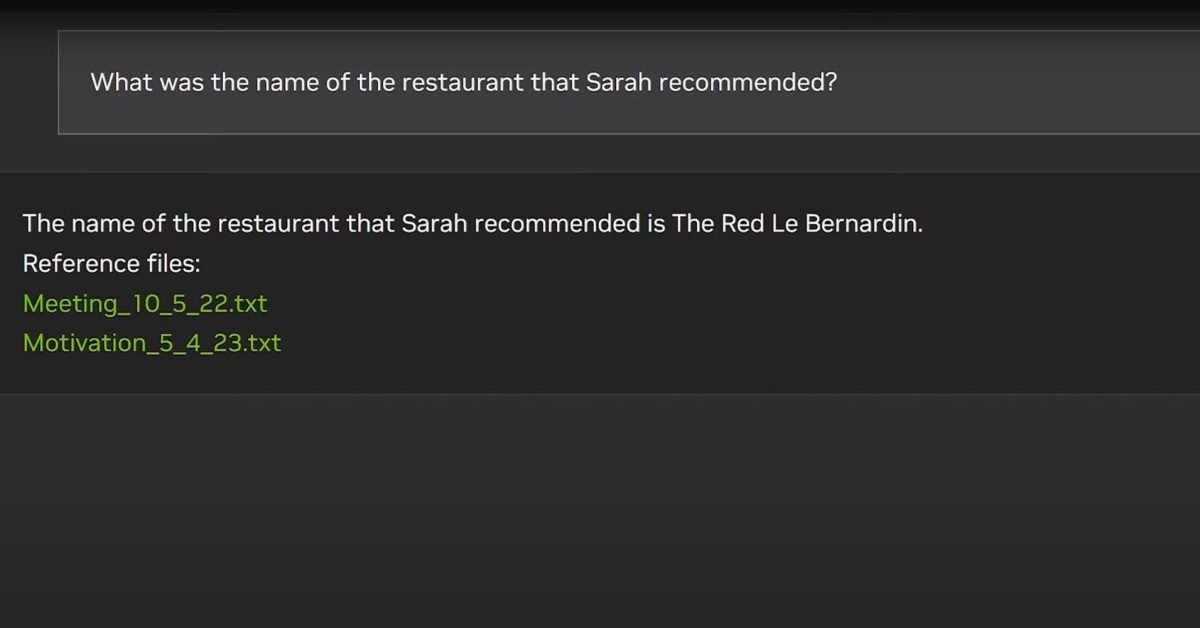

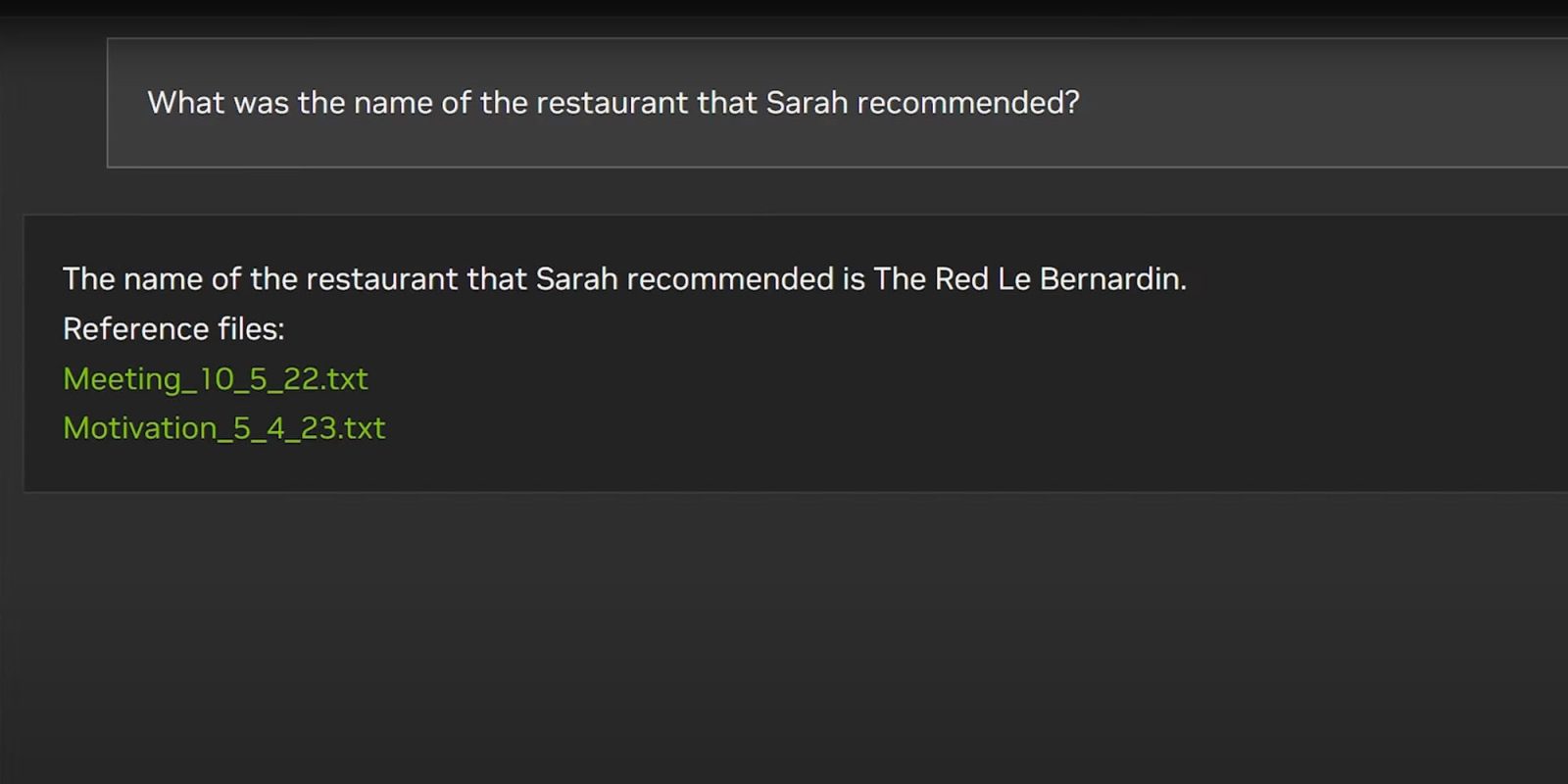

Nvidia has already created a demo version of one on-device chatbot, albeit one which requires a Windows PC with one of the company’s own premium GPU cards.

Chat With RTX is a demo app that lets you personalize a GPT large language model (LLM) connected to your own content—docs, notes, videos, or other data. Leveraging retrieval-augmented generation (RAG), TensorRT-LLM, and RTX acceleration, you can query a custom chatbot to quickly get contextually relevant answers. And because it all runs locally on your Windows RTX PC or workstation, you’ll get fast and secure results […]

Chat with RTX supports various file formats, including text, pdf, doc/docx, and xml. Simply point the application at the folder containing your files and it’ll load them into the library in a matter of seconds. Additionally, you can provide the url of a YouTube playlist and the app will load the transcriptions of the videos in the playlist, enabling you to query the content they cover.

Your Windows machine will need an NVIDIA GeForce RTX 30 or 40 Series GPU, or an RTX Ampere or Ada Generation GPU with at least 8GB of VRAM.
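To give a feel for the retrieval-augmented generation (RAG) step Nvidia mentions, here is a minimal sketch. It is not Nvidia's implementation and does not use TensorRT-LLM: it assumes a simple word-overlap score in place of a real embedding model, the function names and sample documents are made up for illustration, and it stops at building the prompt that would be passed to a local model.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercases text and splits it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, docs, k=1):
    """Returns the names of the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(
        docs.items(),
        key=lambda item: cosine(q, Counter(tokenize(item[1]))),
        reverse=True,
    )
    return [name for name, _ in ranked[:k]]


def build_prompt(query, docs, k=1):
    """Prepends the retrieved text to the question, ready for a local model."""
    context = "\n".join(docs[name] for name in retrieve(query, docs, k))
    return f"Context:\n{context}\n\nQuestion: {query}"


# Stand-ins for files loaded from a local folder (hypothetical content).
docs = {
    "notes.txt": "The quarterly budget review is scheduled for Friday.",
    "recipe.txt": "Mix flour, water and yeast, then bake the bread for forty minutes.",
}

print(retrieve("When is the budget review?", docs))  # ['notes.txt']
print(build_prompt("When is the budget review?", docs))
```

The point of the pattern is that the model only sees the handful of passages relevant to the question, rather than the whole folder.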

ChatGPT may finally remember you

In other AI news, ChatGPT is getting a memory – at least, for a small number of users.

One of the limitations of ChatGPT to date has been that each session runs in isolation – that is, it won’t remember anything you did in previous sessions.

OpenAI is now testing a version which can remember certain data and preferences, for a more coherent experience over time.

We’re testing memory with ChatGPT. Remembering things you discuss across all chats saves you from having to repeat information and makes future conversations more helpful.

You’re in control of ChatGPT’s memory. You can explicitly tell it to remember something, ask it what it remembers, and tell it to forget conversationally or through settings. You can also turn it off entirely […]

ChatGPT’s memory will get better the more you use it and you’ll start to notice the improvements over time. For example:

- You’ve explained that you prefer meeting notes to have headlines, bullets and action items summarized at the bottom. ChatGPT remembers this and recaps meetings this way.

- You’ve told ChatGPT you own a neighborhood coffee shop. When brainstorming messaging for a social post celebrating a new location, ChatGPT knows where to start.

- You mention that you have a toddler and that she loves jellyfish. When you ask ChatGPT to help create her birthday card, it suggests a jellyfish wearing a party hat.

- As a kindergarten teacher with 25 students, you prefer 50-minute lessons with follow-up activities. ChatGPT remembers this when helping you create lesson plans.
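The controls OpenAI describes (remember, ask what is remembered, forget, switch off) can be pictured with a small sketch. This is purely illustrative: the `Memory` class and its methods are hypothetical, and say nothing about how ChatGPT actually stores or uses what it remembers.

```python
class Memory:
    """Toy model of a user-controlled memory (all names are hypothetical)."""

    def __init__(self):
        self.enabled = True
        self.facts = []

    def remember(self, fact):
        # Store a fact only while memory is switched on, skipping duplicates.
        if self.enabled and fact not in self.facts:
            self.facts.append(fact)

    def recall(self):
        # "Ask it what it remembers": nothing is surfaced when switched off.
        return list(self.facts) if self.enabled else []

    def forget(self, keyword):
        # Drop every stored fact that mentions the keyword.
        self.facts = [f for f in self.facts if keyword.lower() not in f.lower()]

    def turn_off(self):
        self.enabled = False


memory = Memory()
memory.remember("Prefers meeting notes with headlines and bullets")
memory.remember("Has a toddler who loves jellyfish")
memory.forget("jellyfish")
print(memory.recall())  # ['Prefers meeting notes with headlines and bullets']

memory.turn_off()
memory.remember("Owns a neighborhood coffee shop")
print(memory.recall())  # []
```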

The company says that it’s being trialled with a small number of users this week, and that it will share wider rollout plans once it has assessed the results.
